If you have ever wondered how Google “sees” your brand, your company, or even yourself as an individual, this post will give you the diagnostic tools to find out. The shift from keyword matching to entity understanding is the most consequential change in search technology of the past decade, and it has only accelerated with LLMs entering the picture.

This is not a theoretical conversation. It is the foundation of how search engines, LLMs, and AI systems interpret content across the web today. By the end of this article, you will understand what entities are, how Google processes them through its Knowledge Graph, how to interrogate that graph yourself, and how to start applying the same diagnostic tools that AI systems rely on.

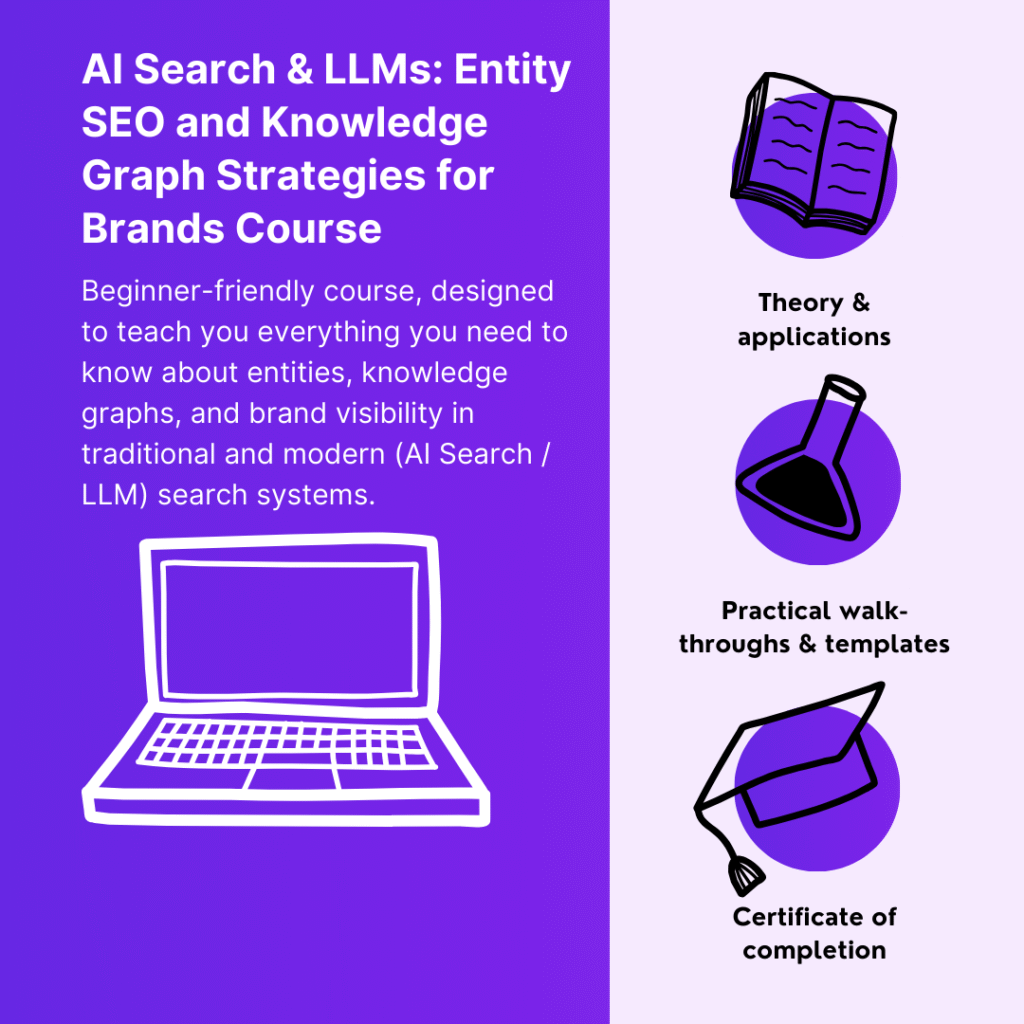

The aim is to give you the conceptual grounding plus two practical diagnostic tools you can apply immediately. The full operational system — the BRIDGE framework, schema-level implementation, LLM citation tracking, the brand entity development workflow — is covered in depth in the AI Search & LLMs: Entity SEO and Knowledge Graph Strategies for Brands course.

From keywords to entities to conversational intelligence

Search has moved from matching keywords, to understanding meaning, to anticipating user needs. The transitions matter.

In the keyword era, search engines matched literal text. They counted how often words appeared on pages, and SEO meant keyword density optimisation. Engines had limited context understanding and operated mostly at the page level.

The entity era changed this. Google’s Knowledge Graph, launched in 2012, introduced semantic relationships and real-world object recognition. The system finally understood that Apple the company is different from Apple the fruit. Search engines began integrating billions of entities and hundreds of billions of facts, powering knowledge panels and rich results through structured data.

We are now in the LLM era of conversational search. LLMs understand multi-layer entity relationships, predict user intent, and provide zero-click answers directly in AI Overviews and AI Mode. They capture context and nuance that simple keyword matching cannot, which makes them more effective at providing comprehensive, cited answers.

What is critical about all of this: content now needs to be optimised not just to rank, but to be understood and cited by AI systems. The formula is straightforward.

Entity clarity + semantic match + verifiable facts = AI citation

This is the new visibility equation, and the rest of this post unpacks how to apply it.

What entities actually are (and how they differ from keywords)

Entities are real-world things, not just keywords. This distinction is more critical than it sounds. Entities possess properties, relationships, and context that are intrinsic to the concept itself. Keywords alone do not.

There are six primary entity categories worth knowing:

- People — individuals, leaders, authors, experts

- Places — locations, regions, addresses

- Organisations — companies, institutions, brands

- Products — software, services, physical goods

- Concepts — ideas, methodologies, topics

- Events — conferences, launches, time-bound occurrences

Each of these entities has attributes and properties that connect it to entities of every other type. A concrete example makes this clearer.

“Tim Cook, CEO of Apple, launches new iPhone at flagship New York store.”

This sentence is a small constellation of entities: a person entity (Tim Cook), an organisation entity (Apple), a product entity (iPhone), and a place entity (New York). That is entity comprehension, and it is fundamentally different from a simple text-matching exercise. Tim Cook is not a string of characters. He is a person, with a role, a relationship to an organisation, and a history of actions.
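One way to picture the sentence above is as typed nodes plus relationships. The sketch below is purely illustrative: the `Entity` class, the type labels, and the relation names (`ceo_of`, `launches`, `has_store_in`) are hypothetical and are not Google's internal representation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    name: str
    type: str  # illustrative label, not an official Google type


# Entities mentioned in the example sentence.
tim_cook = Entity("Tim Cook", "Person")
apple = Entity("Apple", "Organisation")
iphone = Entity("iPhone", "Product")
new_york = Entity("New York", "Place")

# Relationships as (subject, relation, object) triples; relation names are hypothetical.
triples = [
    (tim_cook, "ceo_of", apple),
    (tim_cook, "launches", iphone),
    (apple, "has_store_in", new_york),
]


def related_to(entity, triples):
    """Return the names of entities directly connected to `entity`, in either direction."""
    names = set()
    for subject, _relation, obj in triples:
        if subject == entity:
            names.add(obj.name)
        elif obj == entity:
            names.add(subject.name)
    return sorted(names)


print(related_to(apple, triples))  # ['New York', 'Tim Cook']
```

A keyword index would only know that the string "Apple" occurs in the sentence. The triple view records what Apple is connected to, which is the part that matters for entity comprehension.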

If you want to dive deeper into the underlying model that connects entities, attributes, and the values they take, the EAV model is a useful companion read.

How Google’s Knowledge Graph stores entity understanding

Google’s Knowledge Graph stores hundreds of billions of verifiable statements about how entities connect to each other. These facts map billions of entities across the categories we just saw, and each entity has a unique identifier in Google’s system.

When you search for “Apple,” Google’s Knowledge Graph disambiguates whether you mean the company, the fruit, or Apple Records, based on context. This infrastructure now powers everything you see in modern search: rich snippets, knowledge panels, AI Overviews, and AI Mode.

The Knowledge Graph was revolutionary because it transformed search from keywords to understanding. The same infrastructure is what now enables LLMs to cite sources with confidence.

The practical question then becomes: how do we interrogate Google’s graph to see the web through its eyes? How do we know whether our company, our brand, or we ourselves are entities in the graph?

Interrogating Google’s Knowledge Graph with the API

The Knowledge Graph Search API lets you query the graph directly and see exactly what Google knows about an entity. This is a concrete API response, not an abstract signal. You can verify how Google already interprets your brand, your CEO, and your products.
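As a minimal sketch of what such a query looks like, the snippet below builds a request URL for the public `entities:search` endpoint. The helper name `build_kg_search_url` and the `YOUR_API_KEY` placeholder are assumptions for illustration; you would need your own key from a Google Cloud project.

```python
from urllib.parse import urlencode

KG_ENDPOINT = "https://kgsearch.googleapis.com/v1/entities:search"


def build_kg_search_url(query, api_key, limit=5, types=None, languages="en"):
    """Build a Knowledge Graph Search API request URL for `query`."""
    params = [
        ("query", query),
        ("key", api_key),
        ("limit", limit),
        ("languages", languages),
    ]
    # Optional schema.org type filter (e.g. "Organization") to narrow ambiguous names.
    for entity_type in types or []:
        params.append(("types", entity_type))
    return f"{KG_ENDPOINT}?{urlencode(params)}"


url = build_kg_search_url("Jane Austen", "YOUR_API_KEY", types=["Person"])
print(url)
```

Fetching the URL (for example with `urllib.request.urlopen`) returns the JSON-LD payload. The `types` filter is a convenient way to separate, say, Apple the organisation from other things that share the name.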

When you query an entity, Google returns structured JSON-LD data. Take Jane Austen as an example. The response gives you:

The entity name and description. “Jane Austen, English novelist.” This is the primary identification Google uses for semantic understanding. If your brand’s description is vague, inaccurate, or misaligned with how you would describe yourself, this is an early signal that the entity is not being represented as you would expect.

A persistent ID. Every well-established entity gets a unique Knowledge Graph identifier. The presence of this ID indicates the entity exists as a recognised node in Google’s graph and is established enough to be referenced consistently. For newer brands or less prominent individuals, this identifier may be missing or weakly associated.

The semantic type. Jane Austen is classified as both Person and Thing. This hierarchical classification enables proper entity relationship modelling and inheritance. It allows Google to know that she shares characteristics with all people while also being a distinct individual. Hierarchical typing is foundational to how entities are interpreted at scale.

A detailed description. Article body, licence information, source URLs — rich contextual information that anchors the entity in real-world references.

The confidence score. This is the metric that matters most for us. The API returns it in the `resultScore` field, which Google documents as an indicator of how well the entity matched the query. In Jane Austen’s case, the score is 11,000. As a rule of thumb, anything above 1,000 typically represents a well-established, unambiguous entity. For comparison, a score under 100 is typical for a new brand and indicates the entity is not fully recognised in the graph.

This API is essentially a diagnostic tool for entity health, a way to see exactly how Google is looking at you. If your brand returns a low confidence score (or no match at all), that is not a vague signal. It is concrete evidence that entity recognition is weak, and it points to specific work to do.
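To make the diagnostic concrete, here is a hedged sketch that summarises a response and bands the score with the rule-of-thumb thresholds from this article. The sample payload is illustrative: its ID is a placeholder, not Jane Austen's real identifier. The API exposes the score as `resultScore`, and the "emerging" label for the middle band is my own assumption.

```python
import json

# Illustrative payload shaped like an API response; the ID is a placeholder.
SAMPLE_RESPONSE = json.loads("""
{
  "@type": "ItemList",
  "itemListElement": [
    {
      "@type": "EntitySearchResult",
      "result": {
        "@id": "kg:/m/PLACEHOLDER",
        "name": "Jane Austen",
        "@type": ["Person", "Thing"],
        "description": "English novelist"
      },
      "resultScore": 11000
    }
  ]
}
""")


def score_band(score):
    """Band a score using this article's rule-of-thumb thresholds."""
    if score > 1000:
        return "well established"
    if score < 100:
        return "weakly recognised"
    return "emerging"  # hypothetical label for the 100 to 1,000 range


def summarise(response):
    """Return one summary dict per matched entity; an empty list means no match."""
    rows = []
    for item in response.get("itemListElement", []):
        result = item.get("result", {})
        score = item.get("resultScore", 0)
        rows.append({
            "id": result.get("@id"),
            "name": result.get("name"),
            "types": result.get("@type", []),
            "description": result.get("description"),
            "score": score,
            "band": score_band(score),
        })
    return rows


for row in summarise(SAMPLE_RESPONSE):
    print(row["name"], row["score"], row["band"])
```

An empty list from `summarise` corresponds to the "no match at all" case, which is worth logging separately from a low score.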

Extracting entities from your content with Natural Language analysis

The Knowledge Graph API tells you how Google represents an entity it already knows. The second diagnostic question is equally important: what entities does Google extract from your content, and which ones does it treat as central?

This is where Google’s Natural Language API comes in. You take a chunk of text — the first paragraph of your site, your about page, your homepage hero copy — plug it into the analysis, and the API breaks it down into the different entities it identifies.
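If you prefer to call the API rather than use a demo interface, the request is a small JSON document. The sketch below builds the body for the REST `documents:analyzeEntities` method; the helper name `build_entity_request` and the sample sentence are assumptions for illustration, and authentication is left out.

```python
import json

NL_ENDPOINT = "https://language.googleapis.com/v1/documents:analyzeEntities"


def build_entity_request(text):
    """Build the JSON body for an analyzeEntities call on plain text."""
    return {
        "document": {"type": "PLAIN_TEXT", "content": text},
        # Tells the API how to compute character offsets for mentions.
        "encodingType": "UTF8",
    }


body = build_entity_request(
    "Jane Austen was an English novelist known for Pride and Prejudice."
)
print(json.dumps(body, indent=2))
```

You would POST this body to `NL_ENDPOINT` with your credentials. Google's `google-cloud-language` client library wraps the same call if you would rather not handle HTTP yourself.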

For a Jane Austen page, the API would return something like:

- Jane Austen — Person, high salience

- English novelist — supporting term, medium salience

- Pride and Prejudice — Work, medium salience

- novel — Concept, medium salience

- world, 19th century — low salience

The most important piece of data here is salience. Salience represents the importance of each entity within the analysed text context. Scores range from 0 to 1, and this metric tells you what Google’s Natural Language API identifies as your page’s primary focus.

Salience above 0.5 is interpreted as the primary content topic. In the Jane Austen example, she has high salience, which means she is correctly identified as the primary entity. She has a proper noun mention, is classified as Person (correctly), and has a Knowledge Graph ID. Everything checks out.

Medium salience entities (0.2 to 0.5) are supporting topics, secondary to the main entity. English novelist and Pride and Prejudice fall here: they provide contextual reinforcement of who Jane Austen is, but they are not the primary topic of the page.

Low salience entities (below 0.2) carry little semantic weight. Words like world and 19th century are detected but have no meaningful role in understanding the page’s purpose. You might even question whether these should be retrieved at all because they bring little contextual value.
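The three bands can be expressed as a small helper. This is a sketch using the thresholds described in this article (0.5 and 0.2); the entity list and its salience values are made up for the example, and the band names are my own labels.

```python
# Illustrative entities; the salience values are invented for this example.
SAMPLE_ENTITIES = [
    {"name": "Jane Austen", "type": "PERSON", "salience": 0.62},
    {"name": "Pride and Prejudice", "type": "WORK_OF_ART", "salience": 0.21},
    {"name": "novel", "type": "OTHER", "salience": 0.12},
    {"name": "world", "type": "LOCATION", "salience": 0.05},
]


def salience_band(salience):
    """Map a 0-to-1 salience score to this article's three bands."""
    if salience > 0.5:
        return "primary"
    if salience >= 0.2:
        return "supporting"
    return "low"


def group_by_band(entities):
    """Group entity names by salience band, preserving input order."""
    bands = {"primary": [], "supporting": [], "low": []}
    for entity in entities:
        bands[salience_band(entity["salience"])].append(entity["name"])
    return bands


print(group_by_band(SAMPLE_ENTITIES))
```

Running this over the entities returned for a real page gives a quick read on whether the page has one clear primary topic or several competing ones.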

Why does this matter for SEO? Because if your brand name has low salience on your own page, then Google is not recognising it as the primary topic. If navigation elements or random words show higher salience than your core entities, your content is lacking focus on what matters most.
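A simple way to test for this problem is to compare your brand's salience with the top entity on the page. The sketch below assumes you already have a list of entities with `name` and `salience` keys; the brand "Acme Analytics" and the values are hypothetical.

```python
def brand_salience_report(entities, brand_name):
    """Compare the brand's salience with the most salient entity on the page."""
    ranked = sorted(entities, key=lambda e: e["salience"], reverse=True)
    brand = next(
        (e for e in ranked if e["name"].lower() == brand_name.lower()), None
    )
    top = ranked[0] if ranked else None
    return {
        "brand_found": brand is not None,
        "brand_salience": brand["salience"] if brand else 0.0,
        "top_entity": top["name"] if top else None,
        "brand_is_top": brand is not None and brand is top,
    }


# Hypothetical page where a navigation term outranks the brand.
page_entities = [
    {"name": "menu", "salience": 0.41},
    {"name": "Acme Analytics", "salience": 0.18},
    {"name": "pricing", "salience": 0.09},
]

report = brand_salience_report(page_entities, "Acme Analytics")
print(report)
```

In this made-up case the report flags that "menu" outranks the brand, which is exactly the focus problem described above.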

Running entity analysis on your own pages is the easy part. Turning the findings into a structured optimisation system is where most teams stall. The AI Search & LLMs course is built around exactly this — the BRIDGE framework that walks you from audit to execution.

How LLMs process entities (and why training inputs matter)

When large language models process text during training, they extract different categories of entity-related features. Understanding these helps explain why some content gets cited by LLMs and other content does not.

- Position mapping. The exact character position within a text that enables entity-text alignment. This is the lowest level of how systems anchor entities to specific locations in content.

- Mention classification. Proper mentions (Jane Austen) versus common mentions (the novelist). LLMs distinguish between these and learn to maintain entity consistency across both.

- Multi-mention entity tracking. Jane Austen can appear in the same text as Austen, she, or the author. Models learn to recognise that all of these refer to the same entity. This is one reason why pronouns and shortened references work fine for human readers but can dilute entity signal if they dominate your content.

- Salience-based filtering. Importance scores are assigned to entities based on their relevance to the overall content — the same salience we just saw in the NLP API output, applied during training at scale.

- Metadata linking to the Knowledge Graph. Entities get linked to external knowledge sources, which is what enables LLMs to cite content with confidence. If your entities are not linked to the Knowledge Graph, the systems have fewer reference points to verify your content against.

- Type hierarchy. Models need to correctly understand whether a given entity is a person, a work of art, a location, an organisation, an event, and so on. The hierarchical typing we saw in the Knowledge Graph response is what makes this possible.
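Several of the features listed above (character positions, proper versus common mentions, multi-mention tracking) are visible in the entity records the Natural Language API returns. The sketch below models one such record and profiles its mentions. It illustrates the data shape only and makes no claim about what happens inside any LLM's training pipeline; the helper names and sample text are assumptions.

```python
from collections import Counter

SAMPLE_TEXT = "Jane Austen wrote six novels. The novelist died in 1817."


def mention(text, surface, mention_type):
    """Build a mention record with the offset where `surface` first appears."""
    return {
        "text": {"content": surface, "beginOffset": text.index(surface)},
        "type": mention_type,  # "PROPER" or "COMMON"
    }


# One entity with two mentions that refer to the same person.
entity = {
    "name": "Jane Austen",
    "type": "PERSON",
    "mentions": [
        mention(SAMPLE_TEXT, "Jane Austen", "PROPER"),
        mention(SAMPLE_TEXT, "novelist", "COMMON"),
    ],
}


def mention_profile(entity):
    """Count proper vs common mentions and list their character offsets."""
    counts = Counter(m["type"] for m in entity["mentions"])
    total = sum(counts.values())
    return {
        "proper": counts["PROPER"],
        "common": counts["COMMON"],
        "proper_share": counts["PROPER"] / total if total else 0.0,
        "offsets": [m["text"]["beginOffset"] for m in entity["mentions"]],
    }


profile = mention_profile(entity)
print(profile)
```

A low `proper_share` on a page where entity recognition matters is a hint that the full name is being replaced by pronouns or generic references too often.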

These are training inputs that shape how LLMs understand and process entities in chunks of text. They directly affect whether your content gets cited when someone asks an AI assistant a question relevant to your space.

What this means for your content

The shift toward entity understanding changes what optimisation looks like. It becomes less about surface-level term usage and more about whether your pages clearly express who and what they are about, reinforce the correct entity types, and reduce ambiguity so systems can confidently associate your brand, people, and products with recognised entities in the graph.

A few principles that follow directly from the diagnostics we just walked through:

- Use complete and unambiguous entity names. Do not rely on pronouns or shortened references in places where entity recognition matters. LLMs excel at entity understanding, but they prefer structured, relationship-rich content. Your H1 and your first 100 words are particularly important — make sure they prominently state the primary entity.

- Focus on entity authority. The goal is to become the definitive source of information for your core entities. This is what makes you cite-worthy when an AI system is composing a response that references those entities.

- Map every entity relationship. Document how you connect to others in your industry — partners, integrations, competitors, customers. Connected entities accumulate authority; isolated entities do not.

- Make your content fact-checkable. Include specific, verifiable details. AI systems reward content that contains concrete, citable facts over content that traffics in vague claims.
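The first principle above is easy to check mechanically. The sketch below tests whether an entity name appears in the H1 and in the first 100 words of the body. The function name, the 100-word default, and the sample page are assumptions for illustration, and the plain substring match will miss name variants.

```python
def entity_prominence(h1, body, entity_name, window=100):
    """Check whether `entity_name` appears in the H1 and the first `window` words."""
    needle = entity_name.lower()
    opening = " ".join(body.split()[:window]).lower()
    return {
        "in_h1": needle in h1.lower(),
        "in_opening": needle in opening,
    }


# Hypothetical about page: the brand is named in the copy but not in the H1.
result = entity_prominence(
    h1="About us",
    body="We build dashboards for marketing teams. Acme Analytics was founded to make reporting simple.",
    entity_name="Acme Analytics",
)
print(result)  # {'in_h1': False, 'in_opening': True}
```

A page that fails the H1 check, like this one, is a good candidate for rewriting the heading so that it states the primary entity outright.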

A formula worth remembering

The simplest summary of what entity-based search in the LLM era actually rewards is the formula we saw earlier:

Entity clarity + semantic match + verifiable facts = AI citation

The goal is not to game the algorithm. It is to become the source that both people and machines trust most. LLMs do not just cite whatever ranks highest. They cite content that is authoritative, unambiguous, and fact-rich. When your page clearly defines what it is about and which entities matter, AI systems can understand and reference your work with confidence.

Smaller brands can outperform bigger ones simply by offering clearer, more complete entity coverage than their competitors. The opportunity is genuinely open.

Continue your learning (MLforSEO)

This post covered what entities are, how Google’s Knowledge Graph stores them, how to use the Knowledge Graph Search API and the Natural Language API as diagnostic tools, and the core principles for content that AI systems can confidently cite. The full implementation — including the BRIDGE framework for systematic entity development, schema markup for multi-entity relationships, the cross-platform distribution playbook, LLM citation monitoring, and a live brand audit masterclass — is in the AI Search & LLMs: Entity SEO and Knowledge Graph Strategies for Brands course on MLforSEO.

When you enrol, you also get access to the dedicated course channel inside the MLforSEO Slack community, where Beatrice Gamba and Lazarina Stoy answer course-specific questions and discuss ongoing implementation projects with course-takers. That is the best way to get personalised support as you put the BRIDGE framework into practice.

Beatrice Gamba

The future of search and content discovery will be dialogical, personalized and agent-mediated. Digital leaders need to start integrating these concepts in their strategies to be ready for what’s coming.