When you ask an AI assistant a question and it returns a synthesised answer with multiple cited sources, those sources are not arbitrary. The system selected each one because it contributed something distinct to the response. The combination of cited sources also tells you something important: the LLM recognises the brands behind those sources as belonging to the same entity neighbourhood.

This is co-citation, and it is one of the most underappreciated mechanisms for building brand authority in AI search. Brands that consistently appear alongside authoritative entities in LLM responses accumulate authority by association. Brands that appear alone, or alongside weaker entities, do not accumulate authority in the same way.

This post covers how co-citation actually works, why it matters more in LLM search than in traditional search, and the practical strategies for building co-citation patterns deliberately.

What co-citation actually is

In academic publishing, co-citation is when two papers are cited together by a third paper. The pattern signals that the two cited papers are recognised as relevant to the same topic, even if they do not reference each other directly. Researchers have used co-citation analysis for decades to map intellectual relationships between authors.

In LLM-driven search, the same pattern emerges. When an AI system synthesises a response and cites multiple sources, those sources are being co-cited. The LLM has implicitly judged them as relevant to the same query and as contributing complementary information.

A practical example. Someone asks an AI assistant about email deliverability best practices. The response cites:

- A Mailgun engineering blog post

- An EmailToolTester comparison article

- A Litmus research report

- Your guide on authentication and SPF setup

If your guide is consistently cited alongside Mailgun, Litmus, and EmailToolTester, you are co-cited with the recognised authorities in email infrastructure. The LLM has placed you in that entity neighbourhood. Over time, as the pattern repeats, the system reinforces the association — and your brand accumulates authority by virtue of the company you keep in cited responses.

Why co-citation matters more in LLM search

In traditional search, citation patterns mattered through backlinks. Pages that earned links from authoritative sources gained authority. The signal was direct and observable.

In LLM search, the same dynamic operates at a different layer. LLMs do not “see” your backlinks directly. They see the patterns of how your content is referenced, cited, and discussed across the web — including in AI responses themselves. Co-citation in those responses is a feedback loop that reinforces or weakens your entity positioning over time.

There are three specific reasons co-citation carries outsized importance.

- It signals entity neighbourhood. When an LLM cites you alongside recognised authorities in your space, it is implicitly classifying you as belonging in that neighbourhood. Recurring co-citation reinforces the classification. Absent co-citation, your brand exists in an entity vacuum where the system has no strong cues about where you belong.

- It distributes authority through proximity. Being cited alongside authoritative entities increases the apparent authority of your own content. The LLM does not give you the authority directly, but the pattern of co-citation makes your entity easier to cite confidently in future responses.

- It compounds with content depth. Brands that have substantive content covering entity relationships (mentioning recognised entities, comparing themselves to alternatives, citing established sources) are more likely to be co-cited because their content provides natural anchors for co-citation. Brands with thin content miss the opportunity even when they would otherwise qualify.

What drives co-citation outcomes

Several factors influence whether your brand gets co-cited with authoritative entities versus cited alone or co-cited with weaker entities.

Topic specificity. Highly specific topics produce sharper co-citation patterns. A query about “email deliverability for B2B SaaS companies sending more than 100k emails per month” produces a narrower co-citation set than “email tips.” Specific topics reward brands that have specific, niche authority. Generic topics reward brands with general authority.

Content depth and entity coverage. Content that explicitly references recognised entities (named tools, named methodologies, named experts, named standards) is more likely to be co-cited with those entities. Content that talks around entities without naming them misses the co-citation opportunity entirely.

Original contribution. LLMs tend to cite content that contributes distinct information — original research, proprietary data, unique perspectives, specific implementation details. Content that mostly summarises what other sources already say is more likely to be used as grounding context without being cited. Co-citation requires that the system see your content as adding something to the response.

Cross-platform consistency. As covered in the disambiguation work, AI systems triangulate entity information across multiple platforms. Brands with consistent presence across structured platforms (Wikidata, LinkedIn, Crunchbase, industry directories) are easier to confidently include in co-citation patterns. Brands with inconsistent or fragmented presence are harder to cite alongside well-established entities because the system’s confidence in their identity is lower.
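A practical way to check your own consistency is to line up the core facts each platform shows about your brand and flag any that disagree. The Python sketch below assumes you have copied those facts (name, website, founding year) from each profile by hand; the brand "Acme Analytics", the field names and the values are hypothetical, and the script does not call any platform API. It needs Python 3.9+ for `str.removeprefix`.

```python
# Hypothetical profile facts, copied by hand from each platform's public profile.
PROFILES = {
    "Wikidata": {"name": "Acme Analytics", "website": "https://acme.example", "founded": "2015"},
    "LinkedIn": {"name": "Acme Analytics", "website": "https://www.acme.example/", "founded": "2015"},
    "Crunchbase": {"name": "Acme Analytics Ltd", "website": "http://acme.example", "founded": "2016"},
}


def normalise(field, value):
    """Strip trivial formatting differences so only real conflicts remain."""
    value = value.strip().lower()
    if field == "website":
        # Treat protocol, "www." and a trailing slash as cosmetic.
        for prefix in ("https://", "http://", "www."):
            value = value.removeprefix(prefix)
        value = value.rstrip("/")
    return value


def find_inconsistencies(profiles):
    """Return {field: {platform: normalised value}} for every field that disagrees."""
    fields = sorted({f for record in profiles.values() for f in record})
    issues = {}
    for field in fields:
        values = {
            platform: normalise(field, record[field])
            for platform, record in profiles.items()
            if field in record
        }
        if len(set(values.values())) > 1:
            issues[field] = values
    return issues


if __name__ == "__main__":
    for field, values in find_inconsistencies(PROFILES).items():
        print(f"{field}: {values}")
```

With this sample data the audit flags the founding year and the "Ltd" suffix on the Crunchbase record, while the website passes once protocol, "www." and trailing slash are normalised. Whether a legal suffix counts as a real inconsistency is a judgement call; the point is to surface every mismatch so you can decide.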

Recency and freshness. Recently published or recently updated content has an edge in co-citation because LLMs frequently surface recent sources for time-sensitive queries. A piece that was definitive in 2023 may still be useful, but a piece updated last month is more likely to appear in current AI responses.

How to build co-citation deliberately

Building co-citation is not about gaming the system. It is about deliberately positioning your brand in the entity neighbourhood where you want to be recognised.

Identify your target neighbourhood. Make a list of the authoritative entities in your specific space. For a project management software company, this might be Asana, ClickUp, Monday.com, Jira, plus recognised thought leaders, methodology entities (Agile, Scrum, Kanban), and established review platforms (G2, Capterra). These are the entities you want to be co-cited with.

Reference target entities accurately in your content. Mention them by full name. Describe how they relate to what you are covering. Compare honestly where relevant. Cite their work where appropriate. This is not about trying to manipulate the system — it is about producing content that genuinely engages with the entity neighbourhood you want to be part of.

Create content that addresses the same queries the authoritative entities address. If the established authorities have content on a specific topic, you should consider whether you have content on that topic too — and whether your content offers something distinct (a different perspective, a different methodology, a specific implementation detail, proprietary data).

Build genuine partnership and integration signals. Real partnerships, real integrations, real co-authored content, real podcast cross-appearances. These produce verifiable external signals that AI systems can use to anchor co-citation patterns. A blog post that merely implies a partnership with a recognised brand does not produce the same effect as an actual partnership.

Earn third-party mentions alongside target entities. Industry articles that list you alongside the authorities. Comparison articles that include you in the consideration set. Speaker line-ups at conferences where the recognised authorities also speak. Press features that group you with the entities you want to be associated with.

Make your content the kind of content LLMs want to cite. Original data. Specific named entities. Verifiable facts. Clear attribution. Structured content that is retrievable as clean chunks. The same patterns that make content citable in general make it more likely to be co-cited specifically.

How to monitor co-citation

Co-citation is harder to measure than backlinks because the citation happens inside AI responses rather than on indexed pages. A practical monitoring approach:

- Sample target queries regularly. Pick a set of 20-50 queries relevant to your space. Run them through ChatGPT, Perplexity, Claude, and Google AI Mode. Record which sources are cited and which sources are co-cited with each other.

- Build a co-citation matrix over time. Track which brands consistently appear alongside which other brands. The matrix reveals your current positioning and where it is shifting.

- Compare to your target neighbourhood. Are you appearing alongside the authoritative entities you wanted to be associated with? If yes, the pattern is working. If no, either the content is not landing or you are being placed in a different neighbourhood than intended.

- Iterate based on observed patterns. If you are consistently co-cited with weaker entities or appearing alone, that is feedback that your positioning needs work. The fix usually involves more deliberate entity references in content, stronger external positioning, or better cross-platform consistency.
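The co-citation matrix and neighbourhood comparison described in the steps above can be kept in a short script. The Python sketch below assumes you log each sampled response by hand as the list of brands it cites; the sample log, the brands "YourBrand", "SomeBlog" and "SendGrid", and the function names are hypothetical illustrations, not real observations or an established tool. Pair counts stored in a `Counter` act as a sparse co-citation matrix, and the report compares your observed neighbours with your target neighbourhood.

```python
from collections import Counter
from itertools import combinations

# Hypothetical sample log: one entry per (query, engine) run, listing the
# brands cited in that AI response. Replace with your own recorded data.
RESPONSES = [
    {"query": "email deliverability best practices", "engine": "Perplexity",
     "cited": ["Mailgun", "Litmus", "EmailToolTester", "YourBrand"]},
    {"query": "how to set up SPF records", "engine": "ChatGPT",
     "cited": ["Mailgun", "YourBrand"]},
    {"query": "email rendering test tools", "engine": "Google AI Mode",
     "cited": ["Litmus", "EmailToolTester"]},
    {"query": "SPF vs DKIM", "engine": "Claude",
     "cited": ["YourBrand"]},
    {"query": "free email marketing tips", "engine": "ChatGPT",
     "cited": ["YourBrand", "SomeBlog"]},
]

# Illustrative target neighbourhood: the entities you want to be co-cited with.
TARGET = {"Mailgun", "Litmus", "EmailToolTester", "SendGrid"}


def co_citation_counts(responses):
    """Sparse co-citation matrix: number of responses citing each brand pair."""
    pairs = Counter()
    for r in responses:
        brands = sorted(set(r["cited"]))  # ignore repeat citations within one response
        for a, b in combinations(brands, 2):
            pairs[(a, b)] += 1
    return pairs


def neighbours(pairs, brand):
    """Brands co-cited with `brand`, and how many responses each shared with it."""
    result = Counter()
    for (a, b), n in pairs.items():
        if a == brand:
            result[b] += n
        elif b == brand:
            result[a] += n
    return result


def neighbourhood_report(responses, brand, target):
    """Compare the observed co-citation neighbourhood of `brand` with `target`."""
    near = set(neighbours(co_citation_counts(responses), brand))
    return {
        "cited_in": sum(1 for r in responses if brand in r["cited"]),
        "solo": sum(1 for r in responses if set(r["cited"]) == {brand}),
        "hits": sorted(target & near),        # target entities you already appear with
        "missing": sorted(target - near),     # target entities you never appear with
        "unexpected": sorted(near - target),  # neighbours outside your target list
    }


if __name__ == "__main__":
    print(neighbourhood_report(RESPONSES, "YourBrand", TARGET))
```

With this sample log the report shows "YourBrand" cited in four responses, once with no other source, alongside three of the four target entities, never alongside "SendGrid", and once alongside "SomeBlog". Counting per response rather than per mention keeps one long answer from inflating a pair, and filtering `RESPONSES` by `engine` before calling the report gives a per-assistant view.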

This monitoring is not as clean as traditional SEO measurement, but it gives you observable patterns that inform real strategic decisions over time.

Continue your learning (MLforSEO)

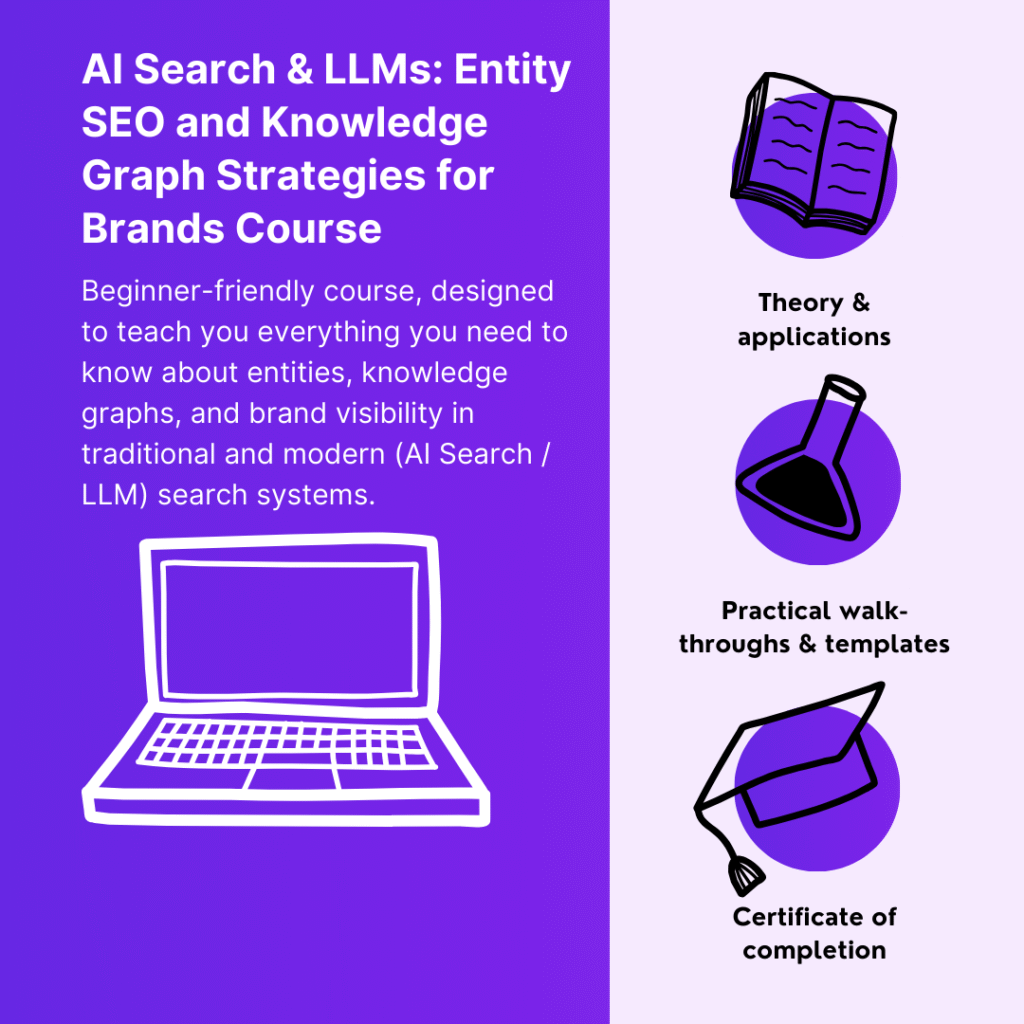

This post covered how co-citation operates in LLM search, why it matters for entity positioning, and the practical strategies for building co-citation patterns deliberately. The full system — including the competitive LLM intelligence workflow, the citation monitoring framework, the reputation engineering patterns, the cross-platform distribution playbook, and the BRIDGE framework that organises authority building across both traditional and AI search — is in the AI Search & LLMs: Entity SEO and Knowledge Graph Strategies for Brands course on MLforSEO.

Enrolling also gets you into the dedicated course channel inside the MLforSEO Slack community, where Beatrice Gamba and Lazarina Stoy answer course-specific questions and discuss ongoing implementation projects with course-takers.

Beatrice Gamba

The future of search and content discovery will be dialogical, personalized and agent-mediated. Digital leaders need to start integrating these concepts in their strategies to be ready for what’s coming.