Understanding why someone searches shapes everything from keyword selection to page structure to which SERP features your content needs to compete with. In this post, we’ll define search intent, work through where the standard categories fall short, look at how engines actually infer intent, and walk through a practical lens for classifying intent at scale in your keyword research.

The aim isn’t to give you a glossary of definitions. It’s to give you a working framework, and to flag where the picture changes in AI search, because intent inference is one of the areas where AI systems behave significantly differently from traditional search. The full classification workflow (rule-based classifiers at scale, AutoML approaches to custom intent classifiers, integrating intent labels into a complete semantic keyword universe) is its own substantial body of work, covered in detail elsewhere.

The core definition of search intent

At its simplest, search intent is the underlying purpose behind a user’s search query — the goal they hope to achieve when they type something into a search bar. It’s the bridge between language and action, shaping how search engines interpret meaning and how content creators should structure information to meet that need.

Academic research suggests that around 75% of search queries can be confidently assigned to a single dominant intent, while the remaining 25% display overlapping or hybrid motivations. This overlap is important: intent isn’t a rigid category, it’s a spectrum. Users may begin with one purpose and evolve toward another as their search journey unfolds. Recognising that fluidity helps you design content pathways that align with real search behaviour rather than tidy taxonomies.

Explicit (macro) intent

SEO professionals have historically grouped explicit intents into three broad categories — the macro intents that still underpin most search models:

- Informational — the user seeks to learn, clarify, or understand something. These queries often start with “how,” “what,” “why,” or “when,” and signal curiosity or problem-solving.

- Navigational — the user is trying to reach a specific digital destination. They already know the brand, product, or platform they want and use search as a shortcut.

- Transactional — the user aims to complete an action. Buying, booking, signing up, downloading. These queries imply readiness to convert.

Each macro intent aligns with different stages of the user journey — awareness, consideration, action — and demands distinct content types, page structures, and SERP expectations. Informational queries are typically satisfied by educational articles or videos. Transactional queries demand optimised product or service pages with clear CTAs.

Hybrid intent categories

As search has matured, hybrid intent categories have emerged that blend aspects of the core three. Users no longer search with purely informational, navigational, or transactional goals in isolation — their queries often express mixed motivations that evolve across a single session or even a single query.

Two of the most important hybrid categories:

- Commercial investigation — researching before buying. Reviews, comparisons, “best of” lists. The intent isn’t directly transactional, but it’s directly tied to a transaction the user is considering. Recognising this as a distinct category matters because content that serves commercial investigation looks different from content that serves either informational or transactional intent — and conflating them is a common mistake that produces under-converting content.

- Localised search — combining navigational, informational, and often transactional intent with a geographic component. “Coffee near me,” “best plumber in Sofia,” “Italian restaurants open now.”

Implicit intent: the layer under the query

Explicit categories describe what a user appears to want. They rarely explain why. Implicit intent looks beneath the surface, revealing the underlying — often unspoken — motivations that shape how, when, and why people search.

Unlike explicit intent, which can often be identified from keywords alone, implicit intent depends on contextual and behavioural signals: the device used, the time and place of the search, the user’s emotional state, and the cultural or situational context of the moment.

Every query carries these invisible layers. A search for “best jobs in design” might look informational. For one person, it reflects ambition. For another, it stems from frustration, burnout, or fear of redundancy. Recognising these differences lets SEOs interpret not just the topic behind a query but the human motive driving it.

Emotional and contextual drivers

Implicit intent often shows up through emotional or contextual drivers that shape how users phrase queries and which results they engage with.

- Emotional intent stems from feelings — reassurance, aspiration, pride, fear — none of which need to appear in the literal query text. A user searching “how to recover from website traffic drop” isn’t only seeking data; they’re seeking reassurance that recovery is possible. “Best portfolio website examples” reflects aspirational intent, a desire to improve and belong.

- Contextual intent arises from the situation in which a search occurs. Environmental factors (home, office, commute), device constraints (mobile vs. desktop), urgency cues. “How to remove coffee stains quickly” or “healthy lunch ideas for work” are loaded with contextual signals that influence both content depth and format expectations.

Together, these emotional and contextual dimensions form the texture of a search — the part algorithms try to infer through personalisation and behavioural modelling, and the part SEOs can mirror through empathetic content design.

Common micro-intent patterns

Within implicit intent, smaller and more specific micro-intents emerge. These micro-motivations explain why users perform certain searches even when the explicit goal looks clear. A query for “ergonomic desk chair” can carry a budget micro-intent (find a cheap one), an affluent micro-intent (find a premium one), an obstacle micro-intent (my back hurts), or an expert-validation micro-intent (which one do ergonomists actually recommend). The query text is identical. The content that satisfies each is different.

Each micro-intent adds granularity to the broader categories. Together they explain how emotional, situational, and social dynamics affect the way people search — and how search engines interpret relevance and satisfaction.

Jobs-to-be-done as a way of understanding implicit intent

The Jobs-to-be-Done (JTBD) framework reframes a query as: “When [situation], I want to [motivation/action], so I can [desired outcome].” It distinguishes between functional and emotional jobs and acknowledges related jobs — troubleshooting, deeper learning, confidence-building. JTBD is a useful complement to micro-intent because it forces you to think about the user’s underlying goal rather than the words they typed.

How search engines infer intent

Search engines don’t rely on keywords alone. They combine explicit signals, implicit cues, and behavioural patterns to interpret what a user really means and what kind of result is most likely to satisfy that meaning. The sophistication extends far beyond what SEOs can directly measure, but the patents give a clear indication of what can be measured and how.

What Google looks at

According to multiple Google patents, query intent classification draws on the interaction between query-level data and behavioural data:

- Query patterns — length, syntax, entity or topic clustering

- Interactions — click patterns, dwell time, brand focus

- Refinements — adding, removing, or reordering words within a session

- Contextual factors — device type, location, time of day

- Content-type cues — explicit modifiers like “video,” “pictures,” or “under 10 minutes” that reveal preferred format

A Google patent on query intent inference describes how a consistent concentration of clicks on one domain signals navigational intent, while dispersed clicks across multiple results suggest informational intent. Longer dwell times or repeat interactions with particular pages indicate the document is satisfying the user’s need.
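
To make the click-concentration idea concrete, here is a minimal sketch. It is not the patent's method: the function names, the 0.7 threshold, and the click logs are all assumptions made up for illustration. It only shows the general shape of the signal, where one domain taking most of the clicks points to navigational intent and spread-out clicks point to informational intent.

```python
from collections import Counter

def top_domain_share(clicked_domains):
    """Return (domain, share of clicks) for the most-clicked domain."""
    if not clicked_domains:
        return None, 0.0
    domain, clicks = Counter(clicked_domains).most_common(1)[0]
    return domain, clicks / len(clicked_domains)

def guess_intent_from_clicks(clicked_domains, threshold=0.7):
    """Label a query from its click log. The threshold is an illustrative assumption."""
    if not clicked_domains:
        return "unknown"
    _, share = top_domain_share(clicked_domains)
    return "navigational" if share >= threshold else "informational"

# Made-up click logs for two hypothetical queries.
concentrated = ["youtube.com"] * 9 + ["wikipedia.org"]
dispersed = ["a.com", "b.com", "c.com", "a.com", "d.com"]

print(guess_intent_from_clicks(concentrated))  # navigational
print(guess_intent_from_clicks(dispersed))     # informational
```

You won't have Google's click logs, but the same logic can be applied to any click data you do have, such as on-site search logs.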

Sessions, sequences, and refinements

A separate patent on query sequence analysis within user sessions explains how Google examines the order and progression of queries to infer evolving intent. The system doesn’t look at a single query in isolation — it looks at the path a user takes across multiple refinements.

If someone searches “home gym equipment,” then “best beginner home gym,” then “buy foldable treadmill,” Google recognises the progression as a refinement chain reflecting transition from informational to transactional intent. These patterns let the system learn not just which pages satisfy a query, but which combinations of searches lead to satisfaction.

The patent describes the system identifying modifications — added or removed terms, reformulation frequency, and which refinements end the session. This data feeds machine learning models that continually update Google’s understanding of intent transitions across the search journey.
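
A rough way to picture "added or removed terms" is to diff consecutive queries in a session. The sketch below is a simplification under stated assumptions: `diff_queries` and `refinement_chain` are hypothetical helper names, terms are split on whitespace only, and nothing here reflects how Google actually stores or models sessions.

```python
def diff_queries(previous, current):
    """Return (added_terms, removed_terms) between two consecutive queries."""
    prev_terms = previous.lower().split()
    curr_terms = current.lower().split()
    added = [t for t in curr_terms if t not in prev_terms]
    removed = [t for t in prev_terms if t not in curr_terms]
    return added, removed

def refinement_chain(session):
    """Diff each query in a session against the one before it."""
    return [diff_queries(a, b) for a, b in zip(session, session[1:])]

session = [
    "home gym equipment",
    "best beginner home gym",
    "buy foldable treadmill",
]

for added, removed in refinement_chain(session):
    print("added:", added, "| removed:", removed)
```

On the example session, the first step adds "best" and "beginner" (a commercial investigation cue), and the second introduces "buy" (a transactional cue), which is the kind of transition the refinement chain is meant to surface.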

Content-type and format hints

Google’s systems also note explicit format indicators within a query. When a user specifies “with pictures,” “under 10 minutes,” or “video tutorial,” that’s a strong signal about content expectations and micro-intent. These cues help the ranking algorithm filter and prioritise content that matches both topic relevance and format relevance.

A “video” query may elevate YouTube results or short-form content. An “image” query may emphasise visual carousels or featured snippets with inline imagery.
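
On the keyword research side, format hints like these are easy to flag with a few regular expressions. The sketch below is an assumption-laden starting point: the pattern names are hypothetical, and the word lists (including "photos" and "mins", which are not from the examples above) would need extending for your own vertical.

```python
import re

# Illustrative patterns only; extend the alternations for your own keyword set.
FORMAT_PATTERNS = {
    "video": re.compile(r"\bvideos?\b"),
    "image": re.compile(r"\b(?:pictures?|images?|photos?)\b"),
    "time_boxed": re.compile(r"\bunder \d+ (?:minutes?|mins?)\b"),
}

def detect_format_hints(query):
    """Return the names of all format patterns found in the query."""
    q = query.lower()
    return [name for name, pattern in FORMAT_PATTERNS.items() if pattern.search(q)]

print(detect_format_hints("pasta recipe with pictures"))       # ['image']
print(detect_format_hints("video tutorial under 10 minutes"))  # ['video', 'time_boxed']
print(detect_format_hints("home gym equipment"))               # []
```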

Limits and ambiguity

Even with billions of data points, intent classification remains probabilistic, not absolute. The same query can express multiple legitimate intents depending on circumstance. “Apple” could be navigational (the brand), informational (the fruit), or transactional (the store).

Search engines handle this by maintaining multiple intent hypotheses simultaneously and testing which results generate stronger engagement. Ambiguity isn’t a flaw — it’s a fundamental feature of language. For SEOs, this means success often depends on designing content ecosystems that address overlapping intents rather than over-optimising for a single one.
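
One way to carry that probabilistic view into your own keyword data is to store a distribution over intents rather than a single label, and flag queries where the top two intents are close. This is a toy illustration, not a description of any search engine's internals: the engagement counts are invented, and the 0.2 margin is an arbitrary assumption.

```python
def normalise(scores):
    """Turn raw per-intent scores into shares that sum to 1."""
    total = sum(scores.values())
    if total == 0:
        return {intent: 0.0 for intent in scores}
    return {intent: value / total for intent, value in scores.items()}

def is_ambiguous(distribution, margin=0.2):
    """True when the top two intents are within `margin` of each other."""
    ranked = sorted(distribution.values(), reverse=True)
    return len(ranked) > 1 and (ranked[0] - ranked[1]) < margin

# Invented engagement counts per intent hypothesis for two hypothetical queries.
mixed = normalise({"navigational": 45, "informational": 35, "transactional": 20})
clear = normalise({"navigational": 90, "informational": 10})

print(is_ambiguous(mixed))  # True
print(is_ambiguous(clear))  # False
```

Queries flagged as ambiguous are candidates for content that addresses more than one intent, rather than a page optimised for a single label.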

Search intent in the AI search era

Intent inference works fundamentally differently in AI search, and that’s worth flagging directly.

In traditional search, intent shapes which results get ranked. Google retrieves a candidate set based on your query, then re-ranks that set using intent and behavioural signals. The query itself stays stable across users — different users see the same candidates in different orders.

In AI search, intent inference happens upstream of retrieval. When AI Mode or Perplexity expands a user’s query through fan-out, the sub-queries it generates reflect the system’s interpretation of what the user actually wants — and that interpretation is built from the user’s prior behaviour, memory, context, and signals as much as from the words typed. Two users with the same query but different inferred intents will trigger different fan-out queries, retrieve different documents, and get different synthesised answers.

I covered this in detail in “How AI Search Personalizes Fan-Out Queries.” The short version for SEO: intent matters more than ever, but it now operates at the query-generation level, not just the ranking level. Content that comprehensively covers an entity’s intent space (informational queries about it, transactional queries around it, comparative queries against alternatives) is content that gets retrieved across more user contexts. Content that targets a single narrow intent gets retrieved less.

There’s a related practical implication: AI systems are increasingly inferring persona signals from query phrasing and surrounding context. “Best budget running shoes for marathons” carries persona signal (budget-conscious, marathon-running) that the system can use to shape fan-out. Your content’s clarity about which personas it serves directly affects which fan-outs retrieve it. Persona-tagged content isn’t a nice-to-have anymore — it’s a retrieval signal.

Macro vs. micro intent and the funnel

Macro intent buckets are useful as a starting framework, but they oversimplify. Within each broad category exist countless micro-intents — the specific, contextual needs that actually drive a user to take action.

Olaf Kopp’s framing extends this thinking by emphasising the importance of addressing micro-moments across the entire customer journey, not just the point of purchase. His model includes after-sale and loyalty stages, recognising that intent continues even after a transaction. Users search for support, troubleshooting, ways to maximise value from a purchase — all part of an ongoing, cyclical intent pattern.

SparkToro’s research on how people use Google shows how this plays out in query distribution. Roughly 150 query terms account for about 15% of total search demand — and these are mostly navigational (“YouTube,” “Gmail,” “Amazon”). Single-word queries represent nearly 59% of all unique terms but only about 2.2% of total search demand. Short, generic searches are vastly outnumbered by longer, more specific ones.

Broken down by intent in the same study, the distribution was roughly 52% informational, 32% navigational, and 14% commercial. Exact proportions vary by dataset, but the pattern is clear: most searches begin as information-seeking, while transactional or commercial searches form a smaller, more targeted subset.

The implication for strategy is significant. Long-tail, context-rich queries carry a higher likelihood of conversion despite representing a smaller share of total volume. They reveal user readiness and situational context, giving you the opportunity to craft highly relevant experiences that meet intent at every stage.

Practical intent classification for semantic keyword research

The real impact comes from applying intent classification in practice. Four complementary methods combine user psychology, search behaviour, and SERP data into a single structured framework.

1. Query-based, rule-based classification

The most direct way to identify intent is through query text analysis using rule-based classification to detect linguistic patterns.

At the simplest level, certain words reliably correlate with macro intent:

- Informational — “how,” “why,” “what,” “when,” “does,” “can”

- Commercial investigation — “vs,” “review,” “best,” “cheap,” “comparison”

- Transactional — “buy,” “sign up,” “book,” “order,” “request”
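
The word lists above translate almost directly into a small rule-based classifier. Treat the following as a minimal sketch rather than a finished tool: the function names are hypothetical, and the precedence order (transactional first, then commercial investigation, then informational) is a design assumption so that a query like "how to buy" is labelled by its strongest conversion cue.

```python
import re

# Checked in order, so earlier intents win when a query matches several lists.
INTENT_TERMS = {
    "transactional": ["buy", "sign up", "book", "order", "request"],
    "commercial investigation": ["vs", "review", "best", "cheap", "comparison"],
    "informational": ["how", "why", "what", "when", "does", "can"],
}

def _has_term(query, term):
    """Whole-word (or whole-phrase) match, so 'can' does not match 'candle'."""
    return re.search(rf"\b{re.escape(term)}\b", query) is not None

def classify_macro_intent(query):
    """Return the first macro intent whose term list matches the query."""
    q = query.lower()
    for intent, terms in INTENT_TERMS.items():
        if any(_has_term(q, term) for term in terms):
            return intent
    return "unclassified"

print(classify_macro_intent("buy foldable treadmill"))               # transactional
print(classify_macro_intent("best beginner home gym"))               # commercial investigation
print(classify_macro_intent("how to remove coffee stains quickly"))  # informational
print(classify_macro_intent("home gym equipment"))                   # unclassified
```

Whole-word matching is deliberately strict: "reviews" will not match "review" until you add the plural, and a word like "book" will mislabel "best book on SEO" as transactional. Those false positives are exactly where industry-specific rules come in.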

To move beyond generic categories, intent analysis needs industry-specific nuance. The way intent is expressed differs dramatically between verticals — a “beginner” modifier in an SEO query signals one thing, in a yoga query another, in a programming query something else again.

Beyond industry, modifiers help identify personas — a layer of micro-intent embedded in the query itself. “Affordable” suggests a budget-conscious user. “Premium” or “luxury” suggests an affluent one. “DIY” suggests a hands-on user; “professional” suggests someone who wants it done by an expert.

These linguistic indicators can scale through automated text parsing or simple rule filters in spreadsheets. Combined with keyword performance data, they let you segment keyword universes by probable user motivation.
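
The persona modifiers can be handled the same way as a simple lookup. In this sketch the persona label names (such as "expert-seeking") and the `tag_personas` helper are assumptions for illustration. The modifier list is only the handful of examples mentioned above.

```python
# Modifier -> persona label. Labels are illustrative, not a standard taxonomy.
PERSONA_MODIFIERS = {
    "affordable": "budget-conscious",
    "premium": "affluent",
    "luxury": "affluent",
    "diy": "hands-on",
    "professional": "expert-seeking",
}

def tag_personas(query):
    """Return the distinct persona labels signalled by modifiers in the query."""
    personas = []
    for token in query.lower().split():
        persona = PERSONA_MODIFIERS.get(token)
        if persona and persona not in personas:
            personas.append(persona)
    return personas

print(tag_personas("affordable ergonomic desk chair"))  # ['budget-conscious']
print(tag_personas("DIY deck repair"))                  # ['hands-on']
print(tag_personas("ergonomic desk chair"))             # []
```

An empty result is informative too: the query carries no explicit persona signal, so the page may need to serve several personas at once.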

2. SERP features as signals

The second classification method relies on SERP feature analysis — the visible clues search engines display to represent their own understanding of intent. This is a backward-looking approach: it reflects what Google currently believes satisfies a query, not necessarily what users originally meant.

Still, these signals are extremely valuable when triangulated with query data:

- Knowledge cards or one-boxes → navigational or “know simple” informational intent

- Product panels, reviews, shopping modules → commercial investigation or transactional intent

- Featured snippets and PAA boxes → informational intent

- Platform-dominant results (Wikipedia, G2, Amazon, Etsy, Shopify) → clues to content format expectations

- Device variance → certain features (maps, app packs, short videos) appear more often on mobile, hinting at situational micro-intents

Cataloguing SERP features at scale across a keyword list lets you quantify how Google interprets specific themes and validate or refine your rule-based classifications.
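
Once you have a list of SERP features per keyword (from whichever SERP data provider you use), the mapping above can be turned into a simple voting scheme. The feature keys below are hypothetical names, not the field names of any particular tool, and each feature votes for every intent the list above associates with it.

```python
from collections import Counter

# Hypothetical feature keys; rename to match your SERP data export.
FEATURE_INTENTS = {
    "knowledge_card": ["navigational", "informational"],
    "product_panel": ["commercial investigation", "transactional"],
    "reviews": ["commercial investigation", "transactional"],
    "shopping_module": ["commercial investigation", "transactional"],
    "featured_snippet": ["informational"],
    "people_also_ask": ["informational"],
}

def serp_intent_votes(features):
    """Count one vote per intent for each recognised SERP feature."""
    votes = Counter()
    for feature in features:
        for intent in FEATURE_INTENTS.get(feature, []):
            votes[intent] += 1
    return votes

votes = serp_intent_votes(["featured_snippet", "people_also_ask", "knowledge_card"])
print(votes.most_common(1)[0][0])  # informational
```

Comparing the top-voted intent with your rule-based label is a quick way to find keywords where the query text and the SERP disagree.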

3. SERP and landing page analysis

A more advanced layer comes from content-type mapping — analysing the nature of the pages currently ranking. Each page type provides a clue about the intent Google has learned to associate with that query:

- Tutorials, guides, how-to articles → informational intent

- Product or category pages → transactional intent

- Comparisons, reviews, listicles → commercial investigation intent

- Podcasts, case studies, white papers → micro-intents like “expert validation” or “social proof”

Extend the analysis to your own site and competitors by identifying which intent each page currently serves and whether there are mismatches. If a page targets “best marketing automation platforms” but is structured as a company announcement, it’s mismatched against the commercial investigation intent dominating the SERP.

Aligning your content format and page type with the dominant intent signals in the SERP makes sure your pages match user expectations and Google’s learned understanding of satisfaction.
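
The mismatch check described in this section can be sketched in a few lines. The page-type labels are assumed to come from your own manual or automated page classification. The helper names and the "announcement" example are illustrative only.

```python
from collections import Counter

# Page type -> intent, following the mapping in this section.
PAGE_TYPE_INTENT = {
    "tutorial": "informational",
    "guide": "informational",
    "how-to": "informational",
    "product": "transactional",
    "category": "transactional",
    "comparison": "commercial investigation",
    "review": "commercial investigation",
    "listicle": "commercial investigation",
}

def dominant_serp_intent(ranking_page_types):
    """Most common intent among the ranking pages, or None if none are recognised."""
    intents = [PAGE_TYPE_INTENT[p] for p in ranking_page_types if p in PAGE_TYPE_INTENT]
    if not intents:
        return None
    return Counter(intents).most_common(1)[0][0]

def is_mismatched(own_page_type, ranking_page_types):
    """True when your page type serves a different intent than the SERP's dominant one."""
    dominant = dominant_serp_intent(ranking_page_types)
    if dominant is None:
        return False
    return PAGE_TYPE_INTENT.get(own_page_type) != dominant

ranking = ["listicle", "comparison", "review", "guide"]
print(dominant_serp_intent(ranking))           # commercial investigation
print(is_mismatched("announcement", ranking))  # True
print(is_mismatched("comparison", ranking))    # False
```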

4. Build the dataset from your own and competitors’ queries

Build and iterate your own intent dataset. Pull keyword and query data from your own domain (Google Search Console) and from competitors (Ahrefs, Semrush). Then:

- Apply rule-based classification (macro + micro intent labels)

- Layer in SERP data (features, ranking domains, top page types)

- Cross-check intent assumptions by comparing how competitors satisfy the same query

- Map each intent category to the most appropriate content format on your site — blog, video, product page, guide, landing page

Repeating this across your entire keyword universe builds a living intent taxonomy that can feed content strategy, internal linking, and analytics segmentation. Maintained over time, this dataset becomes the foundation for semantic keyword clustering, content forecasting, and SERP opportunity modelling.
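
As a rough picture of what one row in that dataset might look like, here is a sketch that pairs the rule-based label with the SERP-derived label, flags disagreements for review, and maps the SERP label to a content format. The `KeywordRecord` structure, the format mapping, and the sample rows are all assumptions. A real dataset would carry more fields (volume, ranking domains, micro-intent tags).

```python
from dataclasses import dataclass

@dataclass
class KeywordRecord:
    keyword: str
    rule_label: str   # from query-text rules
    serp_label: str   # from SERP features or ranking page types

    @property
    def needs_review(self):
        """Flag rows where the two classification methods disagree."""
        return self.rule_label != self.serp_label

# Illustrative mapping from intent to a content format on your site.
INTENT_TO_FORMAT = {
    "informational": "guide",
    "commercial investigation": "comparison",
    "transactional": "product page",
}

def plan_content(records):
    """Return (keyword, suggested format, needs_review) for each record."""
    return [
        (r.keyword, INTENT_TO_FORMAT.get(r.serp_label, "unmapped"), r.needs_review)
        for r in records
    ]

records = [
    KeywordRecord("best marketing automation platforms",
                  "commercial investigation", "commercial investigation"),
    KeywordRecord("home gym equipment", "unclassified", "commercial investigation"),
]

for row in plan_content(records):
    print(row)
```

Here the SERP label drives the format choice, on the assumption that the SERP reflects what currently satisfies the query. The review flag keeps the rule-based view in the loop.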

Implementation checklist

A condensed version of the workflow above:

- Define macro + micro taxonomy. Start with informational/navigational/transactional plus commercial investigation and local. Add micro-intents — emotional, contextual, persona-driven, format-driven, depth-driven.

- Create rule-based detectors. Phrase lists for each macro intent; industry-specific modifiers; persona and price/quality signals; troubleshooting and obstacle patterns (“can’t,” “won’t work,” “broken”).

- Mine and label your data. Pull queries from GSC and tools. Auto-label macro/micro intent from text patterns. Flag desired format (“video,” “pictures,” “under 10 minutes”). Capture context hints (device words, temporal cues).

- SERP read-back. For important keywords, capture features, ranking domains, and page types. Use this to validate or adjust your labels and to choose the right content format.

- Map to page types and content. Align intent to the correct page type on your site (resource hub, guide, comparison, product, local). Make sure on-page copy and structure reflect the micro-intent.

- Account for personas. Create variants or sections that speak to affluent vs. budget signals without diluting clarity.

- Close the loop. Monitor engagement (clicks, dwell, next-page paths) to refine labels and formats over time.
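
As one example of the rule-based detectors in the checklist, the troubleshooting and obstacle patterns can be caught with a single regular expression. This is a minimal sketch using only the three example phrases from the checklist. It accepts both straight and curly apostrophes, since query exports vary.

```python
import re

# Matches "can't"/"cant", "won't work"/"wont work", and "broken" as whole words.
OBSTACLE_PATTERN = re.compile(r"\b(?:can['’]?t|won['’]?t work|broken)\b")

def has_obstacle_signal(query):
    """True when the query contains a troubleshooting or obstacle phrase."""
    return bool(OBSTACLE_PATTERN.search(query.lower()))

print(has_obstacle_signal("can't connect printer to wifi"))  # True
print(has_obstacle_signal("printer won’t work"))             # True
print(has_obstacle_signal("best wireless printer"))          # False
```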

Turning search intent insights into action

Search intent isn’t a theoretical exercise. It’s the bridge between how users think and how content performs. Across this post, we’ve covered the full spectrum of intent — explicit (informational, navigational, transactional), hybrid (commercial, local), and implicit (emotional, contextual, persona-driven, environmental). Search engines infer these patterns by analysing queries, sessions, refinements, and behavioural data, building a multidimensional understanding of what users seek. AI search systems take this further, using inferred intent to shape the queries they generate before retrieval even begins.

The teams that operate well in this environment treat intent as a first-class signal — present in keyword research, content briefs, page design, internal linking, and analytics. The teams that don’t tend to over-target a single intent per page and underperform in feature-heavy SERPs where multiple intents overlap.

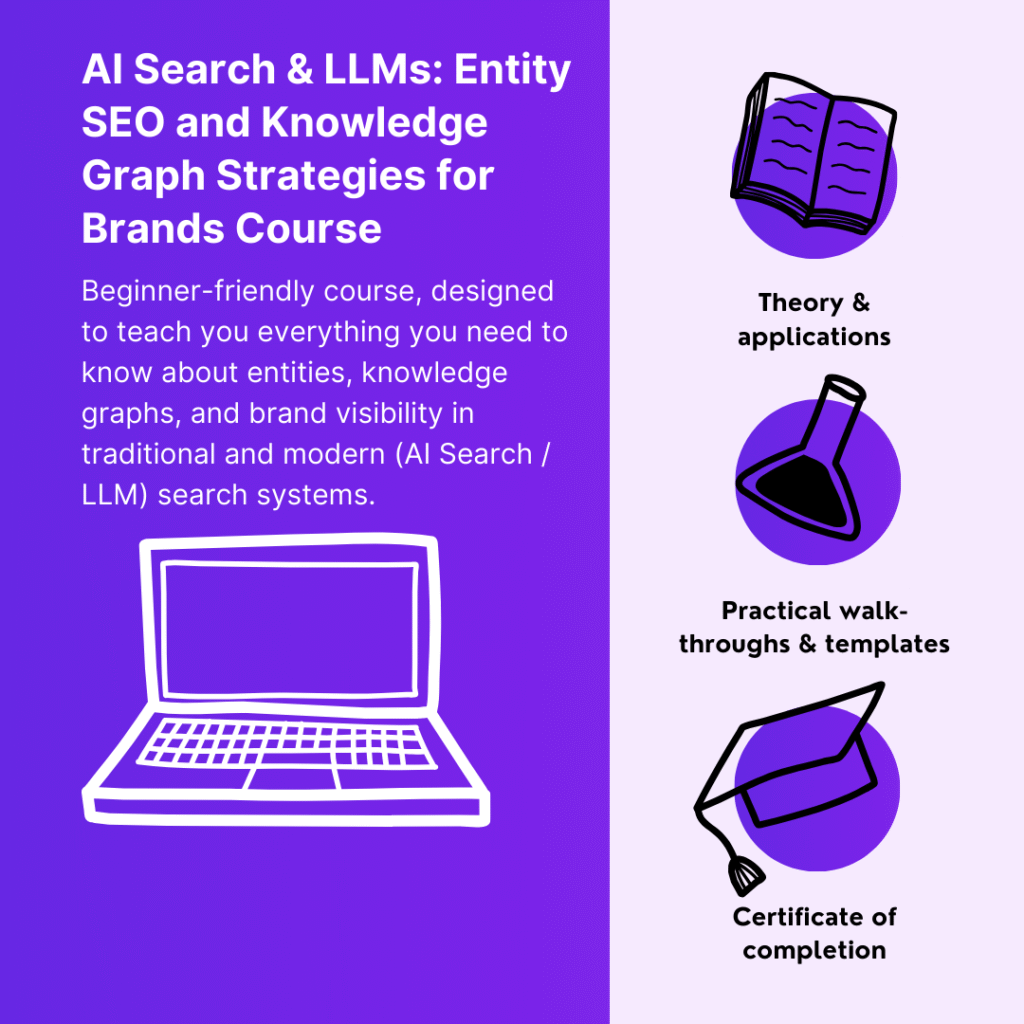

Continue your learning (MLforSEO)

This post covered what search intent is, the methods to classify it, and how AI search changes the calculation. The full implementation — including rule-based classifiers in Google Sheets, SERP-feature-driven intent classification at scale, AutoML approaches to building a custom intent classifier on your own data, persona-aware intent modelling, and integrating intent labels into your semantic keyword universe — is in the search intent classification module of the Semantic AI-Powered SEO Keyword Research course on MLforSEO.

Lazarina Stoy is a Digital Marketing Consultant with expertise in SEO, Machine Learning, and Data Science, and the founder of MLforSEO. Lazarina’s expertise lies in integrating marketing and technology to improve organic visibility strategies and implement process automation.

A University of Strathclyde alumna, she has worked across sectors such as B2B, SaaS, and big tech, with notable projects for AWS, Extreme Networks, neo4j, Skyscanner, and other enterprises.

Lazarina champions marketing automation by creating resources for SEO professionals and speaking at industry events globally on the significance of automation and machine learning in digital marketing. Her contributions to the field are recognized in publications like Search Engine Land, Wix, and Moz, to name a few.

As a mentor on GrowthMentor and a guest lecturer at the University of Strathclyde, Lazarina dedicates her efforts to education and empowerment within the industry.