Search is no longer a simple exchange between a user’s typed words and a list of matching documents. As AI-powered search systems reshape how information is retrieved and presented, a concept that used to be invisible has become central to modern SEO: synthetic queries.

Synthetic queries aren’t queries users type into a search box. They’re queries generated internally by search systems themselves to better interpret, expand, and satisfy a user’s underlying information need. Although they’re invisible to users, they directly influence which documents get retrieved, which sources get cited, and which brands or pages appear in AI-generated answers. In practice, modern SEO increasingly involves competing in searches no human ever typed.

This post covers what synthetic queries are, why they exist, how AI systems generate them, and why semantic coverage, not keyword density, now determines visibility. The aim is an intro-level understanding, plus a practical lens for spotting where synthetic queries are shaping your visibility. The full implementation (simulating likely fan-out around target topics, measuring semantic coverage against fan-out expectations, and distinguishing synthetic from user-initiated queries in your data) is a substantial workflow of its own, with its own templates and labs.

The shift from query-based to meaning-based retrieval

In traditional search, retrieval was relatively straightforward. A user typed a query, the search engine matched it to documents containing the same or closely related terms, and results were ranked by relevance and authority signals.

If someone searched “best running shoes for flat feet,” the system largely evaluated documents against those exact terms or simple variations. Ranking algorithms were complex, but the input query itself was fixed, human-generated, and transparent.

AI-powered search systems work differently. Platforms like Google AI Mode, Perplexity, ChatGPT search, and Claude with search enabled no longer treat the user’s query as the sole retrieval instruction. The typed query is a starting signal. The system interprets it, expands it, and generates multiple additional queries behind the scenes to explore the topic space more thoroughly.

For the same query about running shoes for flat feet, an AI system may internally ask about arch support, pronation control, biomechanical features, podiatrist recommendations, or comparisons between stability and motion-control shoes. Each of those internally generated prompts is a synthetic query. Together, they let the system retrieve information across multiple dimensions before synthesising a single answer.

This is what’s commonly called query fan-out, and it’s documented directly in Google’s patents. The Thematic Search patent describes how a single query can result in multiple sub-queries based on “sub-themes.” Google’s patent on generating query variants outlines how trained generative models create those variants in real time. Google’s User Embedding Models patent describes how user-specific context shapes which sub-queries get generated for which users, so the same query from different users can produce different fan-outs.

Why synthetic queries exist at all

Synthetic queries exist because human queries are often ambiguous, incomplete, or imprecise — and AI systems are designed to compensate.

Ambiguity. A short query like “python tutorial” could refer to a programming language, a snake species, or comedy related to Monty Python. Rather than guess, the system generates multiple interpretations internally and tests them. By exploring alternative meanings through synthetic queries, it determines which interpretation best aligns with contextual signals, user history, or behavioural patterns.

Semantic coverage. Users frequently describe problems in informal or non-technical language. “Why does my chest hurt when I breathe” doesn’t contain medical terminology, but it may relate to several clinically distinct conditions. By generating synthetic queries that reference recognised medical concepts, the system can retrieve more complete and accurate information than the original phrasing alone allows.

Entity understanding. Modern search systems are built around entities and their relationships. When someone searches for a person, organisation, or event, the system often expands the query to explore associated entities, historical context, roles, and attributes. This is how AI systems move beyond simple fact retrieval toward richer, contextualised answers.

Multi-dimensional questions. Many user queries are multi-dimensional without explicitly stating so. “Best city to live in 2026” implicitly involves cost of living, employment, safety, healthcare, education, and lifestyle preferences. Synthetic queries let AI systems decompose these hidden dimensions and research each independently before assembling a final response.

How AI systems generate synthetic queries

Under the hood, synthetic queries are produced through a combination of lexical and semantic techniques. These include synonym expansion, spelling correction, and stemming, plus embedding-based similarity search that identifies conceptually related terms. Large language models also decompose complex questions into smaller sub-questions, explore different facets (price, geography, audience), and reformulate queries based on language, tone, or contextual signals.
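
To make the layering concrete, here is a minimal Python sketch of two of those techniques, synonym expansion and facet decomposition, applied to the running shoes example. It is an illustration only, not how any search platform actually implements fan-out: the `SYNONYMS` table, the `FACETS` list, and every function name are hypothetical, and a production system would derive these from embeddings or a language model rather than a hand-written dictionary.

```python
from itertools import product

# Hypothetical, hand-written synonym table (an assumption for illustration).
# A real system would learn these relationships rather than hard-code them.
SYNONYMS = {
    "best": ["top", "recommended"],
    "shoes": ["trainers", "sneakers"],
}

# Hypothetical facets a model might explore for this kind of query.
FACETS = ["arch support", "pronation control", "podiatrist recommendations"]


def lexical_variants(query: str) -> list[str]:
    """Swap each word for itself or a synonym and return every combination."""
    options = [[word] + SYNONYMS.get(word, []) for word in query.lower().split()]
    return [" ".join(combo) for combo in product(*options)]


def facet_queries(query: str, facets: list[str]) -> list[str]:
    """Build one sub-query per facet by appending the facet to the query."""
    return [f"{query} {facet}" for facet in facets]


def fan_out(query: str) -> list[str]:
    """Combine both expansions, keeping order and dropping exact repeats."""
    candidates = lexical_variants(query) + facet_queries(query, FACETS)
    return list(dict.fromkeys(candidates))


queries = fan_out("best running shoes for flat feet")
print(len(queries))  # 9 lexical variants + 3 facet queries = 12
for q in queries[:4]:
    print(q)
```

Even this toy version turns one typed query into twelve retrieval candidates, which is the basic shape of fan-out.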

These mechanisms aren’t used in isolation. They’re layered together, with diversity controls and deduplication applied to ensure broad yet relevant coverage of the topic space. Different platforms also implement them differently — some lean more heavily on user context (which makes their fan-out more personalised), others apply broader topic decomposition that’s less user-specific. I covered the platform-by-platform differences in detail in How AI Search Personalizes Fan-Out Queries, with the spectrum running from Perplexity’s shallow session-based inference to Copilot’s deep cross-product context.
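
The deduplication step can be sketched in a few lines. The version below uses Jaccard similarity over word sets as a stand-in for whatever similarity measure a real platform applies (most likely embedding-based). The function names and the 0.8 threshold are assumptions chosen for the example.

```python
def tokens(query: str) -> set[str]:
    """Lower-case a query and split it into a set of words."""
    return set(query.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of words two queries have in common (0.0 to 1.0)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def deduplicate(candidates: list[str], threshold: float = 0.8) -> list[str]:
    """Keep a candidate only if it is not too similar to one already kept."""
    kept: list[str] = []
    for candidate in candidates:
        cand_tokens = tokens(candidate)
        if all(jaccard(cand_tokens, tokens(k)) < threshold for k in kept):
            kept.append(candidate)
    return kept


candidates = [
    "stability shoes for flat feet",
    "shoes for flat feet stability",          # same words, different order
    "best stability shoes for flat feet",     # one extra word
    "motion control shoes vs stability shoes",
    "podiatrist recommended arch support",
]
print(deduplicate(candidates))
```

Raising the threshold keeps more near-duplicates; lowering it pushes the surviving set toward diversity at the cost of dropping legitimate variants.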

What this means in practice

The rise of synthetic queries has dramatically increased the total number of searches happening behind the scenes. Automated SEO tools polling SERPs, richer conversational prompts in AI interfaces, and AI agents that autonomously research and verify information all contribute. A single user action can now trigger dozens or even hundreds of synthetic retrieval queries.

This has profound implications for how content is discovered. Visibility is no longer determined by ranking for a single, well-defined keyword. It depends on how well content aligns with the broader semantic space AI systems explore through synthetic queries.

This is what I’ve called the shift from optimising for queries to optimising for retrieval — and the dynamics play out very differently across the AI search ecosystem. Each platform implements fan-out differently and uses different signals to personalise it, but the core mechanic — generate sub-queries, retrieve across them, synthesise — is consistent.

How synthetic queries are commonly categorised

Synthetic queries take many forms, but they tend to fall into a few recurring patterns:

- Rephrasings — simple reformulations of the original query designed to capture similar intent in different language

- Implicit follow-ups — questions a user is likely to care about but didn’t explicitly ask (“what should I look for in X,” “how do I avoid common mistakes with Y”)

- Comparative — exploring alternatives and trade-offs (“X vs. Y,” “alternatives to Z”)

- Entity-focused — anchoring retrieval around specific people, brands, or organisations mentioned in the query

- Exploratory — investigating adjacent topics (“background on X,” “history of Y”)

- Contextual — incorporating location, time, or personalisation signals

- Clarification — testing different interpretations of a vague request

Together, these patterns let AI systems map a topic far more comprehensively than traditional keyword matching ever could.
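
If you want to brainstorm these patterns for your own topics, a simple template filler is enough to get started. The sketch below covers four of the categories. The follow-up, comparative, and exploratory templates reuse the phrasings from the list above, while the contextual templates and all names in the code are my own assumptions. It approximates the pattern, not any platform's actual output.

```python
# Hypothetical templates per category (an assumption for illustration).
TEMPLATES = {
    "implicit_follow_up": [
        "what should I look for in {topic}",
        "how do I avoid common mistakes with {topic}",
    ],
    "comparative": ["{topic} vs {alternative}", "alternatives to {topic}"],
    "exploratory": ["background on {topic}", "history of {topic}"],
    "contextual": ["{topic} in {location}", "{topic} {year}"],
}


def categorised_queries(topic: str, **context) -> dict[str, list[str]]:
    """Fill every template whose placeholders are available in the context."""
    result: dict[str, list[str]] = {}
    for category, templates in TEMPLATES.items():
        filled = []
        for template in templates:
            try:
                filled.append(template.format(topic=topic, **context))
            except KeyError:
                # A placeholder such as {location} was not supplied; skip it.
                continue
        result[category] = filled
    return result


result = categorised_queries(
    "stability shoes", alternative="motion-control shoes", year=2026
)
for category, queries in result.items():
    print(category, queries)
```

Because no `location` was passed, the location template is skipped rather than filled with a guess.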

Why synthetic queries matter for semantic SEO

For SEO practitioners, the most important implication: ranking for the original user query no longer guarantees visibility in AI-generated answers. A page may rank first for a headline keyword and still be excluded if it fails to address the synthetic queries the AI system deems relevant.

This places far greater emphasis on content depth and semantic completeness. Synthetic queries probe multiple facets of a topic, so content that only answers a narrow question is less likely to be retrieved than content that anticipates related needs, explains underlying concepts, and connects relevant entities. Keyword density becomes far less important than conceptual coverage.

Entity usage becomes central. Because AI systems rely heavily on entity recognition to generate synthetic queries, content that clearly identifies and contextualises relevant entities has more retrieval opportunities. Pages that explicitly explain relationships between concepts are more likely to surface across a wider range of synthetic query variations.

A related implication that often gets overlooked: synthetic queries create what’s effectively a long-tail explosion. Where the user query is the head, the synthetic queries are the tail — and each piece of content competes across the entire tail, not just the head. A piece of content that ranks well for the head but doesn’t address any of the tail variants captures the head traffic only. A piece that’s structured to address the tail captures the head plus everything fanned out from it.

Understanding query fan-out through content analysis

Instead of treating synthetic queries as an abstract system behaviour, it’s often easier to understand them by working backwards from content and language patterns.

Take a guide on training a golden retriever puppy. A minimal article that lists a handful of basic commands technically answers a narrow query, but it leaves large parts of the underlying information space unexplored. A more effective piece naturally expands into breed-specific temperament, early socialisation windows, common behavioural issues, different training methodologies, and typical problems owners encounter during the first year.

From an AI system’s perspective, this expanded coverage isn’t accidental. Each additional concept corresponds to a likely synthetic query the system generates while interpreting the original user request. Questions about biting, attention span, or reinforcement techniques are rarely typed verbatim, but they’re highly predictable once the system understands the entity (golden retriever) and the implied life stage (puppy).

This is where recognising synthetic queries becomes a practical SEO skill. While you can’t definitively label any specific query as synthetic versus user-generated, certain patterns consistently distinguish them.

Synthetic queries tend to be more explicit, more formal, and more precise in how they express intent. They often surface entities, attributes, or comparisons that users imply rather than state directly. By contrast, user queries are typically shorter, more conversational, and less structured.
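
Those tendencies can be turned into a rough triage score for sorting a keyword list. The sketch below is a heuristic under loud assumptions: the two word lists, the six-word length cut-off, and the scoring weights are all invented for illustration, and a high score only means a query looks more formal and explicit, not that it is definitely synthetic.

```python
import re

# Hypothetical marker lists (assumptions; tune them on your own data).
CONVERSATIONAL = {"i", "my", "me", "we", "our", "please"}
EXPLICIT_MARKERS = {
    "vs", "versus", "comparison", "alternatives", "recommendations", "features",
}


def synthetic_likeness(query: str) -> int:
    """Higher scores suggest a more explicit, formal phrasing."""
    words = re.findall(r"[a-z0-9'-]+", query.lower())
    score = 0
    if len(words) >= 6:
        score += 1  # longer queries tend to spell out more of the intent
    score += sum(1 for word in words if word in EXPLICIT_MARKERS)
    score -= sum(1 for word in words if word in CONVERSATIONAL)
    return score


for query in [
    "Why does my chest hurt when I breathe",
    "stability vs motion-control running shoes for overpronation",
]:
    print(synthetic_likeness(query), query)
```

Treat the output as a way to prioritise which queries to inspect by hand, not as a classifier.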

By examining how a topic naturally expands when you aim to answer it well, you can often infer the kinds of synthetic queries an AI system is likely generating. Content that anticipates these expansions — by addressing implied questions, clarifying entity relationships, and covering adjacent concerns — aligns more closely with how modern search systems retrieve information.

Practical implications for SEO strategy

A few specific shifts to make in how you research and produce content.

Plan for fan-out coverage, not single-keyword targeting. For each significant topic, write down the synthetic queries you’d expect a thorough AI system to generate around it, then cover them in your content. Tools that simulate fan-out (several have appeared since 2025) can help you validate your guesses against likely sub-queries.
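
A first-pass coverage check against your own list of expected queries can be as simple as the sketch below. It assumes exact word matching with a small hypothetical stopword list, so it will miss plurals and synonyms; a real check would use stemming or embeddings. The function names and the 0.75 threshold are assumptions for the example.

```python
import re

# Hypothetical stopword list (an assumption; extend it for real use).
STOPWORDS = {"a", "an", "the", "for", "of", "to", "in", "and", "or", "is", "with"}


def content_terms(text: str) -> set[str]:
    """Lower-case the text and return its distinct non-stopword tokens."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def coverage_report(
    content: str, expected_queries: list[str], min_share: float = 0.75
) -> dict[str, bool]:
    """Mark each expected query as covered if enough of its terms appear."""
    page_terms = content_terms(content)
    report = {}
    for query in expected_queries:
        query_terms = content_terms(query)
        share = len(query_terms & page_terms) / len(query_terms) if query_terms else 0.0
        report[query] = share >= min_share
    return report


page = (
    "A golden retriever puppy responds well to positive reinforcement. "
    "Start socialisation early and redirect biting onto chew toys."
)
report = coverage_report(
    page,
    ["golden retriever puppy biting", "puppy socialisation early", "crate training schedule"],
)
for query, covered in report.items():
    print("covered" if covered else "GAP    ", query)
```

The queries flagged as gaps become the outline for what the page still needs to address.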

Increase entity density. AI fan-out is anchored on entities. Content that clearly names, contextualises, and relates entities is content that gets retrieved across more sub-queries. Use entity extraction tools on your own content to check whether the entities you intend to cover are actually being recognised as central.
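
Before reaching for a full entity extraction tool, a quick sanity check is to count how often the entities you intend to cover are actually named on the page. The sketch below does only that, using plain phrase matching. It is not entity recognition, and the entity list and function name are hypothetical.

```python
import re
from collections import Counter


def entity_mentions(text: str, entities: list[str]) -> Counter:
    """Count whole-phrase, case-insensitive mentions of each target entity."""
    lowered = text.lower()
    counts: Counter = Counter()
    for entity in entities:
        pattern = r"\b" + re.escape(entity.lower()) + r"\b"
        counts[entity] = len(re.findall(pattern, lowered))
    return counts


text = (
    "Stability shoes limit overpronation. Motion-control shoes go further "
    "than stability shoes for severe overpronation."
)
counts = entity_mentions(text, ["stability shoes", "overpronation", "arch support"])
for entity, n in counts.items():
    print(n, entity)
```

An entity with zero mentions is either missing from the page or only implied, and implied entities give retrieval systems less to anchor on.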

Cover implicit follow-ups explicitly. A page about a topic should answer the obvious question, the comparison question, the alternatives question, the gotchas question, and the next-step question. These are the standard implicit follow-ups synthetic queries probe.

Structure for retrieval, not just reading. AI systems retrieve at the chunk level. Self-contained sections with clear headings, summary paragraphs, and explicit entity references are more retrievable than long unbroken prose. Optimise for the retrieved chunk being usable in isolation — because that’s what often happens to it.
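
One way to audit this is to split a page into heading-delimited chunks and flag the ones that would not make sense in isolation. The sketch below assumes your draft uses `#`-style headings and applies two crude checks, both of which are my own assumptions rather than known retrieval rules: the chunk should name the main entity, and it should not open with a word that points back at earlier text.

```python
# Hypothetical list of openers that lean on earlier context (an assumption).
DANGLING_OPENERS = ("it ", "this ", "they ", "these ", "that ")


def split_into_chunks(text: str) -> list[dict]:
    """Group non-empty lines under the most recent '#' heading."""
    chunks, heading, body = [], "", []
    for line in text.splitlines():
        if line.startswith("#"):
            if body:
                chunks.append({"heading": heading, "body": " ".join(body)})
            heading, body = line.lstrip("#").strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        chunks.append({"heading": heading, "body": " ".join(body)})
    return chunks


def standalone_issues(chunk: dict, entity: str) -> list[str]:
    """List reasons a chunk may be hard to use out of context."""
    issues = []
    body = chunk["body"].lower()
    if entity.lower() not in body:
        issues.append("entity not named in chunk")
    if body.startswith(DANGLING_OPENERS):
        issues.append("opens with a reference to earlier text")
    return issues


page = """# Crate training
Crate training a golden retriever puppy works best in short sessions.

# Biting
This usually fades by six months if you redirect it onto toys.
"""
chunks = split_into_chunks(page)
for chunk in chunks:
    print(chunk["heading"], standalone_issues(chunk, "golden retriever"))
```

The second section reads fine on the page, but lifted out on its own it never says what "this" is or which breed it applies to.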

Watch competitor coverage, not just competitor rankings. A competitor outranking you for the headline keyword matters less than a competitor having broader semantic coverage that gets retrieved across more fan-out variants. Run entity analysis on competitor content and look at coverage breadth, not just word count.

Originate where you can. Original data, proprietary research, and specific named entities are structurally more cite-worthy than generic explainers. AI systems compositing answers across multiple sources tend to cite the source that contributed something specific — a number, a definition, a perspective — over the source that contributed something generic. If your content is forgettable, it’s also unciteable.

A word on measuring fan-out exposure

One of the practical challenges with synthetic queries is that you can’t directly see them. They’re internal to the AI system. So how do you know whether your content is performing well across fan-out variants?

The honest answer at the intro level is: imperfectly. The current measurement landscape includes:

- AI citation tracking tools (several have emerged through 2025 and 2026) that monitor whether your domain gets cited in AI Overviews, ChatGPT, Perplexity, and similar systems for specific query sets

- Manual prompt testing — asking AI systems your target queries and noting which sources they cite

- Coverage simulators that approximate likely fan-out for a given query and let you check whether your content addresses those variants

- Server-side referral data — some AI systems do send referral traffic that you can identify and trend

None of these gives you a clean equivalent of the rank-tracking data SEOs are used to. The measurement infrastructure for AI search is still maturing. Working with imperfect signals is part of the territory right now, and the teams that build the measurement habit now will have far better visibility a year from now than teams that wait for the perfect tool.

Optimise for retrieval, not just queries

Synthetic queries represent a fundamental change in how search systems operate. Optimisation is no longer about matching the exact words users type — it’s about anticipating the questions AI systems need to ask in order to understand intent. Semantic SEO, in this context, is the mechanism through which content becomes retrievable and visible in an AI-mediated search environment.

The good news: this isn’t a separate “AI SEO” discipline. It’s the same semantic keyword research work that has been advocated for years — entity coverage, attribute mapping, query path modelling, intent classification — applied with awareness that retrieval coverage now determines visibility, not just ranking position. Teams that already operate semantically have a head start. Teams that don’t are accumulating debt in a keyword-first content programme that’s going to need restructuring.

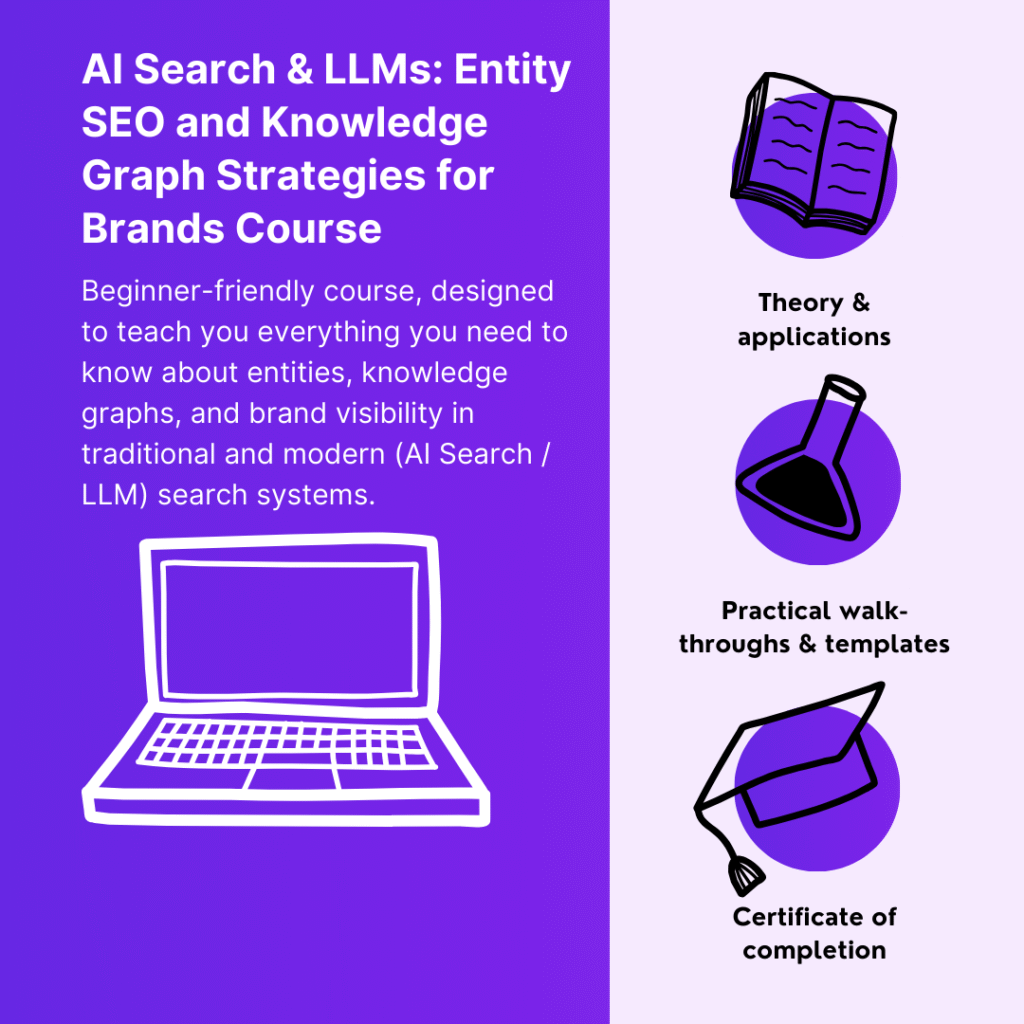

Continue your learning (MLforSEO)

This post covered what synthetic queries are and why they matter. The full implementation — including a hands-on practical lab on distinguishing between synthetic and user-initiated queries in your own keyword data, methods for simulating likely fan-out around your target topics, frameworks for measuring semantic coverage against fan-out expectations, and integration with the rest of the semantic keyword research toolkit — is in the Semantic AI-Powered SEO Keyword Research course on MLforSEO. The lesson on synthetic queries breaks down how modern search systems generate and use query fan-outs and how this changes the way keyword research and content strategy should be approached.

Lazarina Stoy is a Digital Marketing Consultant with expertise in SEO, Machine Learning, and Data Science, and the founder of MLforSEO. Lazarina’s expertise lies in integrating marketing and technology to improve organic visibility strategies and implement process automation.

A University of Strathclyde alumna, Lazarina has worked across sectors such as B2B, SaaS, and big tech, with notable projects for AWS, Extreme Networks, neo4j, Skyscanner, and other enterprises.

Lazarina champions marketing automation by creating resources for SEO professionals and speaking at industry events globally on the significance of automation and machine learning in digital marketing. Her contributions to the field are recognized in publications like Search Engine Land, Wix, and Moz, to name a few.

As a mentor on GrowthMentor and a guest lecturer at the University of Strathclyde, Lazarina dedicates her efforts to education and empowerment within the industry.